Advanced setup for power users | Wandb\n",

"\n",

"При желании можно подключить библиотеку [Weights&Biases](https://wandb.ai/site) - бесплатный трекер экспериментов. И файнтюннить по взрослому."

]

},

{

"cell_type": "code",

"execution_count": 7,

"metadata": {},

"outputs": [],

"source": [

"# import wandb\n",

"\n",

"# wb_token = 'WANDB_API_KEY' # ключ c сайта Weights & Biases https://wandb.ai/site\n",

"# wandb.login(key=wb_token)\n",

"# далее раскомментируйте параметр record_to"

]

},

{

"cell_type": "code",

"execution_count": 11,

"metadata": {

"colab": {

"base_uri": "https://localhost:8080/"

},

"id": "95_Nn-89DhsL",

"outputId": "476f105b-b374-4861-eec5-88b0f3c859a6"

},

"outputs": [

{

"name": "stderr",

"output_type": "stream",

"text": [

"max_steps is given, it will override any value given in num_train_epochs\n"

]

}

],

"source": [

"from trl import SFTTrainer\n",

"from transformers import TrainingArguments\n",

"from unsloth import is_bfloat16_supported\n",

"\n",

"trainer = SFTTrainer(\n",

" model = model,\n",

" tokenizer = tokenizer,\n",

" train_dataset = dataset,\n",

" dataset_text_field = \"text\",\n",

" max_seq_length = max_seq_length,\n",

" dataset_num_proc = 2,\n",

" packing = False, # Can make training 5x faster for short sequences.\n",

" args = TrainingArguments(\n",

" per_device_train_batch_size = 4,\n",

" gradient_accumulation_steps = 2,\n",

" warmup_steps = 10,\n",

" #num_train_epochs = 1, # Set this for 1 full training run.\n",

" max_steps = 60,\n",

" learning_rate = 2e-4,\n",

" fp16 = not is_bfloat16_supported(),\n",

" bf16 = is_bfloat16_supported(),\n",

" logging_steps = 1,\n",

" optim = \"adamw_8bit\",\n",

" weight_decay = 0.01,\n",

" lr_scheduler_type = \"linear\",\n",

" seed = 3407,\n",

" output_dir = \"outputs\",\n",

" # report_to=\"wandb\", # Если используете Weights & Biases\n",

" ),\n",

")"

]

},

{

"cell_type": "code",

"execution_count": 14,

"metadata": {

"colab": {

"base_uri": "https://localhost:8080/"

},

"id": "2ejIt2xSNKKp",

"outputId": "c4b07c62-3201-4a90-f024-560a86785d23"

},

"outputs": [

{

"name": "stdout",

"output_type": "stream",

"text": [

"GPU = Tesla T4. Max memory = 14.748 GB.\n",

"12.742 GB of memory reserved.\n"

]

}

],

"source": [

"#@title Show current memory stats\n",

"gpu_stats = torch.cuda.get_device_properties(0)\n",

"start_gpu_memory = round(torch.cuda.max_memory_reserved() / 1024 / 1024 / 1024, 3)\n",

"max_memory = round(gpu_stats.total_memory / 1024 / 1024 / 1024, 3)\n",

"\n",

"print(f\"GPU = {gpu_stats.name}. Max memory = {max_memory} GB.\")\n",

"print(f\"{start_gpu_memory} GB of memory reserved.\")"

]

},

{

"cell_type": "code",

"execution_count": 12,

"metadata": {

"colab": {

"base_uri": "https://localhost:8080/",

"height": 1000

},

"id": "yqxqAZ7KJ4oL",

"outputId": "bbe9d498-00da-414d-99ad-14388acf1014"

},

"outputs": [

{

"name": "stderr",

"output_type": "stream",

"text": [

"==((====))== Unsloth - 2x faster free finetuning | Num GPUs = 1\n",

" \\\\ /| Num examples = 587 | Num Epochs = 4\n",

"O^O/ \\_/ \\ Batch size per device = 8 | Gradient Accumulation steps = 4\n",

"\\ / Total batch size = 32 | Total steps = 60\n",

" \"-____-\" Number of trainable parameters = 41,943,040\n"

]

},

{

"data": {

"text/html": [

"\n",

" \n",

" \n",

"

\n",

" [60/60 43:35, Epoch 3/4]\n",

"

\n",

" \n",

" \n",

" | Step | \n",

" Training Loss | \n",

"

\n",

" \n",

" \n",

" \n",

" | 1 | \n",

" 2.236400 | \n",

"

\n",

" \n",

" | 2 | \n",

" 2.247700 | \n",

"

\n",

" \n",

" | 3 | \n",

" 2.328900 | \n",

"

\n",

" \n",

" | 4 | \n",

" 2.165200 | \n",

"

\n",

" \n",

" | 5 | \n",

" 2.294000 | \n",

"

\n",

" \n",

" | 6 | \n",

" 2.186900 | \n",

"

\n",

" \n",

" | 7 | \n",

" 2.269100 | \n",

"

\n",

" \n",

" | 8 | \n",

" 2.214700 | \n",

"

\n",

" \n",

" | 9 | \n",

" 2.289900 | \n",

"

\n",

" \n",

" | 10 | \n",

" 2.298600 | \n",

"

\n",

" \n",

" | 11 | \n",

" 2.244600 | \n",

"

\n",

" \n",

" | 12 | \n",

" 2.201800 | \n",

"

\n",

" \n",

" | 13 | \n",

" 2.153100 | \n",

"

\n",

" \n",

" | 14 | \n",

" 2.188300 | \n",

"

\n",

" \n",

" | 15 | \n",

" 2.166900 | \n",

"

\n",

" \n",

" | 16 | \n",

" 2.182000 | \n",

"

\n",

" \n",

" | 17 | \n",

" 2.188200 | \n",

"

\n",

" \n",

" | 18 | \n",

" 2.098000 | \n",

"

\n",

" \n",

" | 19 | \n",

" 2.084500 | \n",

"

\n",

" \n",

" | 20 | \n",

" 2.096200 | \n",

"

\n",

" \n",

" | 21 | \n",

" 2.201400 | \n",

"

\n",

" \n",

" | 22 | \n",

" 2.091800 | \n",

"

\n",

" \n",

" | 23 | \n",

" 2.053300 | \n",

"

\n",

" \n",

" | 24 | \n",

" 2.117500 | \n",

"

\n",

" \n",

" | 25 | \n",

" 2.018800 | \n",

"

\n",

" \n",

" | 26 | \n",

" 2.011700 | \n",

"

\n",

" \n",

" | 27 | \n",

" 1.953300 | \n",

"

\n",

" \n",

" | 28 | \n",

" 1.964100 | \n",

"

\n",

" \n",

" | 29 | \n",

" 2.048200 | \n",

"

\n",

" \n",

" | 30 | \n",

" 1.907600 | \n",

"

\n",

" \n",

" | 31 | \n",

" 2.030600 | \n",

"

\n",

" \n",

" | 32 | \n",

" 2.014700 | \n",

"

\n",

" \n",

" | 33 | \n",

" 1.982400 | \n",

"

\n",

" \n",

" | 34 | \n",

" 1.982400 | \n",

"

\n",

" \n",

" | 35 | \n",

" 1.898200 | \n",

"

\n",

" \n",

" | 36 | \n",

" 2.025500 | \n",

"

\n",

" \n",

" | 37 | \n",

" 1.779900 | \n",

"

\n",

" \n",

" | 38 | \n",

" 2.007600 | \n",

"

\n",

" \n",

" | 39 | \n",

" 1.968800 | \n",

"

\n",

" \n",

" | 40 | \n",

" 1.961200 | \n",

"

\n",

" \n",

" | 41 | \n",

" 1.911900 | \n",

"

\n",

" \n",

" | 42 | \n",

" 1.888400 | \n",

"

\n",

" \n",

" | 43 | \n",

" 1.826900 | \n",

"

\n",

" \n",

" | 44 | \n",

" 1.803100 | \n",

"

\n",

" \n",

" | 45 | \n",

" 1.816400 | \n",

"

\n",

" \n",

" | 46 | \n",

" 1.819600 | \n",

"

\n",

" \n",

" | 47 | \n",

" 1.691800 | \n",

"

\n",

" \n",

" | 48 | \n",

" 1.928200 | \n",

"

\n",

" \n",

" | 49 | \n",

" 1.881500 | \n",

"

\n",

" \n",

" | 50 | \n",

" 1.897900 | \n",

"

\n",

" \n",

" | 51 | \n",

" 1.940600 | \n",

"

\n",

" \n",

" | 52 | \n",

" 1.860200 | \n",

"

\n",

" \n",

" | 53 | \n",

" 1.859400 | \n",

"

\n",

" \n",

" | 54 | \n",

" 1.853800 | \n",

"

\n",

" \n",

" | 55 | \n",

" 1.901000 | \n",

"

\n",

" \n",

" | 56 | \n",

" 1.895100 | \n",

"

\n",

" \n",

" | 57 | \n",

" 1.795600 | \n",

"

\n",

" \n",

" | 58 | \n",

" 1.882400 | \n",

"

\n",

" \n",

" | 59 | \n",

" 1.795100 | \n",

"

\n",

" \n",

" | 60 | \n",

" 1.654000 | \n",

"

\n",

" \n",

"

"

],

"text/plain": [

""

]

},

"metadata": {},

"output_type": "display_data"

}

],

"source": [

"# Запускаем тренировку!\n",

"trainer_stats = trainer.train()"

]

},

{

"cell_type": "code",

"execution_count": 15,

"metadata": {

"colab": {

"base_uri": "https://localhost:8080/"

},

"id": "pCqnaKmlO1U9",

"outputId": "7e351717-6f83-4794-dff1-633468bc3b3f"

},

"outputs": [

{

"name": "stdout",

"output_type": "stream",

"text": [

"2669.216 seconds used for training.\n",

"44.49 minutes used for training.\n",

"Peak reserved memory = 12.742 GB.\n",

"Peak reserved memory for training = 0.0 GB.\n",

"Peak reserved memory % of max memory = 86.398 %.\n",

"Peak reserved memory for training % of max memory = 0.0 %.\n"

]

}

],

"source": [

"#@title Show final memory and time stats\n",

"used_memory = round(torch.cuda.max_memory_reserved() / 1024 / 1024 / 1024, 3)\n",

"used_memory_for_lora = round(used_memory - start_gpu_memory, 3)\n",

"used_percentage = round(used_memory /max_memory*100, 3)\n",

"lora_percentage = round(used_memory_for_lora/max_memory*100, 3)\n",

"print(f\"{trainer_stats.metrics['train_runtime']} seconds used for training.\")\n",

"print(f\"{round(trainer_stats.metrics['train_runtime']/60, 2)} minutes used for training.\")\n",

"print(f\"Peak reserved memory = {used_memory} GB.\")\n",

"print(f\"Peak reserved memory for training = {used_memory_for_lora} GB.\")\n",

"print(f\"Peak reserved memory % of max memory = {used_percentage} %.\")\n",

"print(f\"Peak reserved memory for training % of max memory = {lora_percentage} %.\")"

]

},

{

"cell_type": "markdown",

"metadata": {

"id": "ekOmTR1hSNcr"

},

"source": [

"# Инференс 💬\n",

"Давайте запустим модель! Можете изменить инструкцию и ввод, Response оставим пустым!\n",

"\n",

"Попробовать вдвое ускорить инференс можно в Colab для **Llama-3.1 8b Instruct** [здесь](https://colab.research.google.com/drive/1T-YBVfnphoVc8E2E854qF3jdia2Ll2W2?usp=sharing)"

]

},

{

"cell_type": "code",

"execution_count": 17,

"metadata": {

"colab": {

"base_uri": "https://localhost:8080/"

},

"id": "kR3gIAX-SM2q",

"outputId": "ec9e915d-ddd7-4249-d135-1676b26f6c69",

"scrolled": true

},

"outputs": [

{

"name": "stdout",

"output_type": "stream",

"text": [

"['<|begin_of_text|>Below is an instruction that describes a task, paired with '\n",

" 'an input that provides further context. Write a response that appropriately '\n",

" 'completes the request.\\n'\n",

" '\\n'\n",

" '### Instruction:\\n'\n",

" 'Write post about the following topic: \\n'\n",

" '\\n'\n",

" '### Input:\\n'\n",

" 'как запустить gen ai стартап\\n'\n",

" '\\n'\n",

" '### Response:\\n'\n",

" '🎤 Сегодня у меня был интересный опыт. \\n'\n",

" '\\n'\n",

" '🔝 Встретился с 2-мя ребятами, которые хотят запустить свой gen ai стартап. \\n'\n",

" '\\n'\n",

" '🏆 В целом, все было классно. У них крутой опыт, хороший тимбилдинг и '\n",

" 'интересная задумка. \\n'\n",

" '\\n'\n",

" '🤔 Однако, я все же посоветовал им не запускать стартап, а просто скинуть '\n",

" 'свою идею в нашу ML лотерею. \\n'\n",

" '\\n'\n",

" '🤔 Понятно, что это не совсем']\n"

]

}

],

"source": [

"# alpaca_prompt = Copied from above\n",

"FastLanguageModel.for_inference(model) # Enable native 2x faster inference\n",

"inputs = tokenizer(\n",

"[\n",

" alpaca_prompt.format(\n",

" \"Write post about the following topic: \", # instruction\n",

" \"как запустить gen ai стартап\", # input\n",

" \"\", # output - leave this blank for generation!\n",

" )\n",

"], return_tensors = \"pt\").to(\"cuda\")\n",

"\n",

"outputs = model.generate(**inputs, max_new_tokens = 128, use_cache = True)\n",

"pprint(tokenizer.batch_decode(outputs))"

]

},

{

"cell_type": "markdown",

"metadata": {},

"source": [

"\n",

" \n",

"✅ После файнтюна модель по тому же запросу пишет пост, и явно угадывается стиль постов канала [Datafeeling](https://t.me/datafeeling)."

]

},

{

"cell_type": "markdown",

"metadata": {

"id": "CrSvZObor0lY"

},

"source": [

" **Запуск генерации в режиме стриминга:** Вы также можете использовать `TextStreamer` для непрерывного инференса, чтобы вы могли видеть генерацию токен за токеном!"

]

},

{

"cell_type": "code",

"execution_count": 18,

"metadata": {

"colab": {

"base_uri": "https://localhost:8080/"

},

"id": "e2pEuRb1r2Vg",

"outputId": "b289b319-ddff-430b-ff0e-d29a403ae59e"

},

"outputs": [

{

"name": "stdout",

"output_type": "stream",

"text": [

"<|begin_of_text|>Below is an instruction that describes a task, paired with an input that provides further context. Write a response that appropriately completes the request.\n",

"\n",

"### Instruction:\n",

"Write post about the following topic: \n",

"\n",

"### Input:\n",

"Как стать kaggle master\n",

"\n",

"### Response:\n",

"🏆 Как стать kaggle master\n",

"\n",

"🤔 На Kaggle сейчас много мэтчей, в которых можно скинуть лидерборд. И это прекрасно, но вот только не все так просто. \n",

"\n",

"🤫 Если ты хочешь добиться успеха, то придется много потрудиться. В противном случае ты просто не добьешься успеха. И это нормально, не судите. \n",

"\n",

"🤔 Поэтому, если ты хочешь добиться успеха, то придется много потрудиться. И это нормально, не судите. \n",

"\n",

"�\n"

]

}

],

"source": [

"from transformers import TextStreamer\n",

"\n",

"FastLanguageModel.for_inference(model) # Enable native 2x faster inference\n",

"inputs = tokenizer(\n",

"[\n",

" alpaca_prompt.format(\n",

" \"Write post about the following topic: \", # instruction\n",

" \"Как стать kaggle master\", # input\n",

" \"\", # output - leave this blank for generation!\n",

" )\n",

"], return_tensors = \"pt\").to(\"cuda\")\n",

"\n",

"\n",

"text_streamer = TextStreamer(tokenizer)\n",

"_ = model.generate(**inputs, streamer = text_streamer, max_new_tokens = 128)"

]

},

{

"cell_type": "markdown",

"metadata": {

"id": "uMuVrWbjAzhc"

},

"source": [

"###

Сохранение, загрузка файнтюненных моделей\n",

"Чтобы сохранить окончательную модель в качестве адаптеров `LoRA`, используйте:\n",

"* либо `push_to_hub` от **Huggingface** для онлайн-сохранения \n",

"* либо `save_pretrained` для локального сохранения\n",

"\n",

"**Важно:** сохраняется ТОЛЬКО адаптер `LoRA`, а не полная модель. Как сохранить в 16-битном формате или `GGUF` смотрите в [этом ноутбуке](https://colab.research.google.com/drive/1Ys44kVvmeZtnICzWz0xgpRnrIOjZAuxp?usp=sharing)!"

]

},

{

"cell_type": "code",

"execution_count": 4,

"metadata": {

"colab": {

"base_uri": "https://localhost:8080/"

},

"id": "3Pt-g2YquOa8",

"outputId": "5ff05c43-7bb8-4c34-b3c9-35102ba254f5"

},

"outputs": [],

"source": [

"from getpass import getpass\n",

"\n",

"hf_token = getpass(prompt=\"Введите ваш HuggingFaceHub API ключ\")\n",

"hf_username = 'Ivanich' # Вводим свой username на HugginFace"

]

},

{

"cell_type": "code",

"execution_count": 23,

"metadata": {

"colab": {

"base_uri": "https://localhost:8080/",

"height": 130,

"referenced_widgets": [

"497302a6acc84773a84ff23cd36da214",

"b62cf033432f40e0a9ee259515167b51",

"22eed99a28c74a80a42f95cc2529913b",

"1f07aa6d2b744092ac1b2f13352b3909",

"7a7468b6571c4b818f706b13e37345b5",

"d2117400d4744ac498d1582a3336f905",

"36e5a40703714c76ba41451af838a1fe",

"0374d3f515e640ea80e11cb8cffdbf7d",

"e08504a1906e41bf8cfe2e7d231e0f2f",

"1417bf853c6241038d6d01fae7183091",

"9d78a73117ff4b59b6f6499b7f978d14",

"297389dcbf3040f19fb6773f776f3f2e",

"1a8353acfc6e4a77b5e754ec3a9d164e",

"a6784a8092dd4652bb19a142b05986b1",

"74d5c798f06346e9bd1cf96bf72669ff",

"d937f93cbe12423d9198d740769eaaad",

"3d7de1da6dc5424bb29a8510b1cfc549",

"d9f595f07ea14377ad4f2af81b3dc236",

"3267638f39f34833955764925bc59ad9",

"7a23197e84e449a6b77b893f4bcc81fb",

"6747454af34a467690d783bae764fbfa",

"d6ef70581b8841d4b7455f1074373478",

"177ec807de33414281f03b5790021dc8",

"a1242cc96bf9450abbbc00593bc7c8a8",

"906abe2734de4a7bbceb71fefb265ae8",

"de7e5ca6727c4c0d99522d35981a4ad2",

"7ca294a7b4aa40668f8ea154f744a4b4",

"d27389522e9c437695f0e93a0ecf1f09",

"16b0f13d883d48809d4703704f43204c",

"2c90127799134934b6eb68325838a8a8",

"c0a6e820a2eb41dc9c6244f614272ef5",

"a4a6ed211ca44d509cb82311c27caba3",

"c7a2e8fad27e4fa2b9c80a970002d6bd"

]

},

"id": "upcOlWe7A1vc",

"outputId": "64f23760-f101-4ef6-e7f8-384eec50db9d"

},

"outputs": [

{

"data": {

"application/vnd.jupyter.widget-view+json": {

"model_id": "497302a6acc84773a84ff23cd36da214",

"version_major": 2,

"version_minor": 0

},

"text/plain": [

"README.md: 0%| | 0.00/588 [00:00\n",

" \n",

"Если перейти по ссылке, которую выдает HF после загрузки на хаб - увидим, что сохранилась не вся модель, а только веса адаптера LoRA (168 Mb). "

]

},

{

"cell_type": "markdown",

"metadata": {

"id": "AEEcJ4qfC7Lp"

},

"source": [

"Now if you want to load the LoRA adapters we just saved for inference, set `False` to `True`:"

]

},

{

"cell_type": "code",

"execution_count": null,

"metadata": {

"colab": {

"base_uri": "https://localhost:8080/"

},

"id": "MKX_XKs_BNZR",

"outputId": "7431de93-bc80-48b7-9491-1f3ce12615fd"

},

"outputs": [

{

"data": {

"text/plain": [

"['<|begin_of_text|>Below is an instruction that describes a task, paired with an input that provides further context. Write a response that appropriately completes the request.\\n\\n### Instruction:\\nWrite post about the following topic\\n\\n### Input:\\nРаспределение долей в стартапе\\n\\n### Response:\\nЯ думаю, что это очень важный вопрос: как распределять доли в стартапе? И, что еще важнее, как распределять доли в стартапе, когда вы еще не знаете, каким будет стартап? Очень много людей в стартапах, которые делают это по-старому, то есть, распределяют доли по статусу: founder, cofounder, employee, contractor, investor, etc. Это не так плохо, но, с другой стороны, я не знаю ни одного стартапа, который бы был успеш']"

]

},

"execution_count": 12,

"metadata": {},

"output_type": "execute_result"

}

],

"source": [

"if False:\n",

" from unsloth import FastLanguageModel\n",

" model, tokenizer = FastLanguageModel.from_pretrained(\n",

" model_name = \"datafeeling_model\", # YOUR MODEL YOU USED FOR TRAINING\n",

" max_seq_length = max_seq_length,\n",

" dtype = dtype,\n",

" load_in_4bit = load_in_4bit,\n",

" )\n",

" FastLanguageModel.for_inference(model) # Enable native 2x faster inference\n",

"\n",

"# alpaca_prompt = You MUST copy from above!\n",

"\n",

"inputs = tokenizer(\n",

"[\n",

" alpaca_prompt.format(\n",

" \"Write post about the following topic\", # instruction\n",

" \"Распределение долей в стартапе\", # input\n",

" \"\", # output - leave this blank for generation!\n",

" )\n",

"], return_tensors = \"pt\").to(\"cuda\")\n",

"\n",

"outputs = model.generate(**inputs, max_new_tokens = 128, use_cache = True)\n",

"tokenizer.batch_decode(outputs)"

]

},

{

"cell_type": "markdown",

"metadata": {},

"source": [

"✅ Отлично, видим, что результат получился похожим на правду"

]

},

{

"cell_type": "markdown",

"metadata": {},

"source": [

"# А что ещё из полезного?\n",

"\n",

"\n",

" \n",

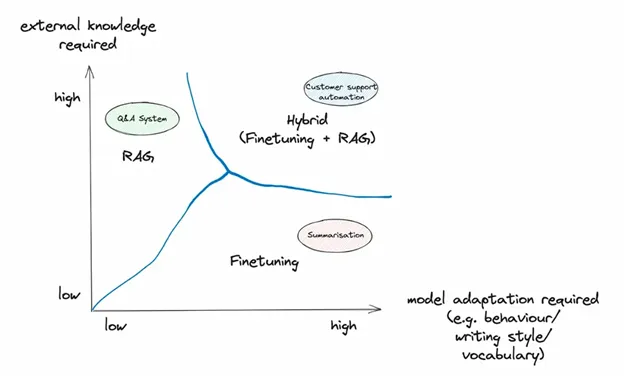

"* [RAFT](https://arxiv.org/abs/2403.10131) - RAG + Fine Tuning - файнтюнят модель, чтобы отбирать документы для RAG\n",

"* [Finetuning ChatGPT](https://platform.openai.com/docs/guides/fine-tuning) - OpenAI и Anthropic объявили, что можно будет файнтюнить их модели на своих данных, а потом использовать эти версии моделей по API (платно).\n",

"* [Датасет](https://huggingface.co/datasets/Ivanich/datafeeling_posts) с постами канала Datafeeling, собранный в этом [ноутбуке](https://github.com/a-milenkin/LLM_practical_course/blob/main/notebooks/M5_2_Dataset_prepare.ipynb).\n",

"* Пример файнтюнинга на готовом датасете с HuggingFace c медицинскими данными. Оформили в виде [статьи на Хабр](https://habr.com/ru/articles/832984/)."

]

},

{

"cell_type": "markdown",

"metadata": {},

"source": [

"#

🧸 Выводы и заключения ✅\n",

"\n",

"**Плюсы FT:**\n",

"* Более консистентные результаты генрации (например, можно передать стилистику автора)\n",

"* Уменьшение галлюцинаций (следование новым доменным знаниями)\n",

"* Можно натренировать под специфический юзкейс\n",

"* Не забывает данные (хранятся в весах модели)\n",

"* Можно передать больше данных, чем в RAG (т.к. нет ограничений контекстного окна)\n",

"* Можно использовать небольшие модели - меньше затрат на дальнейшее использование\n",

"* Приватность - данные не передаются открыто в промпте, а \"зашиты\" в весах (меньше возможностей для промпт-хакинга)\n",

"* Выше скорость инференса по сравнению с RAG (т.к. не нужно делать семантический поиск по базе и короче промпт, быстрее начинается генерация)\n",

"\n",

"**Минусы FT:**\n",

"* Нужно собрать гораздо больше данных (самая трудоемкая и важная часть)\n",

"* Повышенный порог входа - нужны более глубокие технические знания\n",

"* Нужно больше ресурсов и времени на старте\n",

"* Необходимость переучивать при выходе новых версий модели\n",

"* Иногда все равно придется использовать RAG для доступа к новой часто изменяющейся информации"

]

},

{

"cell_type": "code",

"execution_count": null,

"metadata": {},

"outputs": [],

"source": []

}

],

"metadata": {

"accelerator": "GPU",

"colab": {

"gpuType": "T4",

"provenance": []

},

"kernelspec": {

"display_name": "cv",

"language": "python",

"name": "python3"

},

"language_info": {

"codemirror_mode": {

"name": "ipython",

"version": 3

},

"file_extension": ".py",

"mimetype": "text/x-python",

"name": "python",

"nbconvert_exporter": "python",

"pygments_lexer": "ipython3",

"version": "3.10.12"

},

"widgets": {

"application/vnd.jupyter.widget-state+json": {

"0374d3f515e640ea80e11cb8cffdbf7d": {

"model_module": "@jupyter-widgets/base",

"model_module_version": "1.2.0",

"model_name": "LayoutModel",

"state": {

"_model_module": "@jupyter-widgets/base",

"_model_module_version": "1.2.0",

"_model_name": "LayoutModel",

"_view_count": null,

"_view_module": "@jupyter-widgets/base",

"_view_module_version": "1.2.0",

"_view_name": "LayoutView",

"align_content": null,

"align_items": null,

"align_self": null,

"border": null,

"bottom": null,

"display": null,

"flex": null,

"flex_flow": null,

"grid_area": null,

"grid_auto_columns": null,

"grid_auto_flow": null,

"grid_auto_rows": null,

"grid_column": null,

"grid_gap": null,

"grid_row": null,

"grid_template_areas": null,

"grid_template_columns": null,

"grid_template_rows": null,

"height": null,

"justify_content": null,

"justify_items": null,

"left": null,

"margin": null,

"max_height": null,

"max_width": null,

"min_height": null,

"min_width": null,

"object_fit": null,

"object_position": null,

"order": null,

"overflow": null,

"overflow_x": null,

"overflow_y": null,

"padding": null,

"right": null,

"top": null,

"visibility": null,

"width": null

}

},

"1417bf853c6241038d6d01fae7183091": {

"model_module": "@jupyter-widgets/base",

"model_module_version": "1.2.0",

"model_name": "LayoutModel",

"state": {

"_model_module": "@jupyter-widgets/base",

"_model_module_version": "1.2.0",

"_model_name": "LayoutModel",

"_view_count": null,

"_view_module": "@jupyter-widgets/base",

"_view_module_version": "1.2.0",

"_view_name": "LayoutView",

"align_content": null,

"align_items": null,

"align_self": null,

"border": null,

"bottom": null,

"display": null,

"flex": null,

"flex_flow": null,

"grid_area": null,

"grid_auto_columns": null,

"grid_auto_flow": null,

"grid_auto_rows": null,

"grid_column": null,

"grid_gap": null,

"grid_row": null,

"grid_template_areas": null,

"grid_template_columns": null,

"grid_template_rows": null,

"height": null,

"justify_content": null,

"justify_items": null,

"left": null,

"margin": null,

"max_height": null,

"max_width": null,

"min_height": null,

"min_width": null,

"object_fit": null,

"object_position": null,

"order": null,

"overflow": null,

"overflow_x": null,

"overflow_y": null,

"padding": null,

"right": null,

"top": null,

"visibility": null,

"width": null

}

},

"16b0f13d883d48809d4703704f43204c": {

"model_module": "@jupyter-widgets/controls",

"model_module_version": "1.5.0",

"model_name": "DescriptionStyleModel",

"state": {

"_model_module": "@jupyter-widgets/controls",

"_model_module_version": "1.5.0",

"_model_name": "DescriptionStyleModel",

"_view_count": null,

"_view_module": "@jupyter-widgets/base",

"_view_module_version": "1.2.0",

"_view_name": "StyleView",

"description_width": ""

}

},

"177ec807de33414281f03b5790021dc8": {

"model_module": "@jupyter-widgets/controls",

"model_module_version": "1.5.0",

"model_name": "HBoxModel",

"state": {

"_dom_classes": [],

"_model_module": "@jupyter-widgets/controls",

"_model_module_version": "1.5.0",

"_model_name": "HBoxModel",

"_view_count": null,

"_view_module": "@jupyter-widgets/controls",

"_view_module_version": "1.5.0",

"_view_name": "HBoxView",

"box_style": "",

"children": [

"IPY_MODEL_a1242cc96bf9450abbbc00593bc7c8a8",

"IPY_MODEL_906abe2734de4a7bbceb71fefb265ae8",

"IPY_MODEL_de7e5ca6727c4c0d99522d35981a4ad2"

],

"layout": "IPY_MODEL_7ca294a7b4aa40668f8ea154f744a4b4"

}

},

"1a8353acfc6e4a77b5e754ec3a9d164e": {

"model_module": "@jupyter-widgets/controls",

"model_module_version": "1.5.0",

"model_name": "HTMLModel",

"state": {

"_dom_classes": [],

"_model_module": "@jupyter-widgets/controls",

"_model_module_version": "1.5.0",

"_model_name": "HTMLModel",

"_view_count": null,

"_view_module": "@jupyter-widgets/controls",

"_view_module_version": "1.5.0",

"_view_name": "HTMLView",

"description": "",

"description_tooltip": null,

"layout": "IPY_MODEL_3d7de1da6dc5424bb29a8510b1cfc549",

"placeholder": "",

"style": "IPY_MODEL_d9f595f07ea14377ad4f2af81b3dc236",

"value": "100%"

}

},

"1f07aa6d2b744092ac1b2f13352b3909": {

"model_module": "@jupyter-widgets/controls",

"model_module_version": "1.5.0",

"model_name": "HTMLModel",

"state": {

"_dom_classes": [],

"_model_module": "@jupyter-widgets/controls",

"_model_module_version": "1.5.0",

"_model_name": "HTMLModel",

"_view_count": null,

"_view_module": "@jupyter-widgets/controls",

"_view_module_version": "1.5.0",

"_view_name": "HTMLView",

"description": "",

"description_tooltip": null,

"layout": "IPY_MODEL_1417bf853c6241038d6d01fae7183091",

"placeholder": "",

"style": "IPY_MODEL_9d78a73117ff4b59b6f6499b7f978d14",

"value": " 588/588 [00:00<00:00, 33.5kB/s]"

}

},

"22eed99a28c74a80a42f95cc2529913b": {

"model_module": "@jupyter-widgets/controls",

"model_module_version": "1.5.0",

"model_name": "FloatProgressModel",

"state": {

"_dom_classes": [],

"_model_module": "@jupyter-widgets/controls",

"_model_module_version": "1.5.0",

"_model_name": "FloatProgressModel",

"_view_count": null,

"_view_module": "@jupyter-widgets/controls",

"_view_module_version": "1.5.0",

"_view_name": "ProgressView",

"bar_style": "success",

"description": "",

"description_tooltip": null,

"layout": "IPY_MODEL_0374d3f515e640ea80e11cb8cffdbf7d",

"max": 588,

"min": 0,

"orientation": "horizontal",

"style": "IPY_MODEL_e08504a1906e41bf8cfe2e7d231e0f2f",

"value": 588

}

},

"297389dcbf3040f19fb6773f776f3f2e": {

"model_module": "@jupyter-widgets/controls",

"model_module_version": "1.5.0",

"model_name": "HBoxModel",

"state": {

"_dom_classes": [],

"_model_module": "@jupyter-widgets/controls",

"_model_module_version": "1.5.0",

"_model_name": "HBoxModel",

"_view_count": null,

"_view_module": "@jupyter-widgets/controls",

"_view_module_version": "1.5.0",

"_view_name": "HBoxView",

"box_style": "",

"children": [

"IPY_MODEL_1a8353acfc6e4a77b5e754ec3a9d164e",

"IPY_MODEL_a6784a8092dd4652bb19a142b05986b1",

"IPY_MODEL_74d5c798f06346e9bd1cf96bf72669ff"

],

"layout": "IPY_MODEL_d937f93cbe12423d9198d740769eaaad"

}

},

"2c90127799134934b6eb68325838a8a8": {

"model_module": "@jupyter-widgets/base",

"model_module_version": "1.2.0",

"model_name": "LayoutModel",

"state": {

"_model_module": "@jupyter-widgets/base",

"_model_module_version": "1.2.0",

"_model_name": "LayoutModel",

"_view_count": null,

"_view_module": "@jupyter-widgets/base",

"_view_module_version": "1.2.0",

"_view_name": "LayoutView",

"align_content": null,

"align_items": null,

"align_self": null,

"border": null,

"bottom": null,

"display": null,

"flex": null,

"flex_flow": null,

"grid_area": null,

"grid_auto_columns": null,

"grid_auto_flow": null,

"grid_auto_rows": null,

"grid_column": null,

"grid_gap": null,

"grid_row": null,

"grid_template_areas": null,

"grid_template_columns": null,

"grid_template_rows": null,

"height": null,

"justify_content": null,

"justify_items": null,

"left": null,

"margin": null,

"max_height": null,

"max_width": null,

"min_height": null,

"min_width": null,

"object_fit": null,

"object_position": null,

"order": null,

"overflow": null,

"overflow_x": null,

"overflow_y": null,

"padding": null,

"right": null,

"top": null,

"visibility": null,

"width": null

}

},

"3267638f39f34833955764925bc59ad9": {

"model_module": "@jupyter-widgets/base",

"model_module_version": "1.2.0",

"model_name": "LayoutModel",

"state": {

"_model_module": "@jupyter-widgets/base",

"_model_module_version": "1.2.0",

"_model_name": "LayoutModel",

"_view_count": null,

"_view_module": "@jupyter-widgets/base",

"_view_module_version": "1.2.0",

"_view_name": "LayoutView",

"align_content": null,

"align_items": null,

"align_self": null,

"border": null,

"bottom": null,

"display": null,

"flex": null,

"flex_flow": null,

"grid_area": null,

"grid_auto_columns": null,

"grid_auto_flow": null,

"grid_auto_rows": null,

"grid_column": null,

"grid_gap": null,

"grid_row": null,

"grid_template_areas": null,

"grid_template_columns": null,

"grid_template_rows": null,

"height": null,

"justify_content": null,

"justify_items": null,

"left": null,

"margin": null,

"max_height": null,

"max_width": null,

"min_height": null,

"min_width": null,

"object_fit": null,

"object_position": null,

"order": null,

"overflow": null,

"overflow_x": null,

"overflow_y": null,

"padding": null,

"right": null,

"top": null,

"visibility": null,

"width": null

}

},

"36e5a40703714c76ba41451af838a1fe": {

"model_module": "@jupyter-widgets/controls",

"model_module_version": "1.5.0",

"model_name": "DescriptionStyleModel",

"state": {

"_model_module": "@jupyter-widgets/controls",

"_model_module_version": "1.5.0",

"_model_name": "DescriptionStyleModel",

"_view_count": null,

"_view_module": "@jupyter-widgets/base",

"_view_module_version": "1.2.0",

"_view_name": "StyleView",

"description_width": ""

}

},

"3d7de1da6dc5424bb29a8510b1cfc549": {

"model_module": "@jupyter-widgets/base",

"model_module_version": "1.2.0",

"model_name": "LayoutModel",

"state": {

"_model_module": "@jupyter-widgets/base",

"_model_module_version": "1.2.0",

"_model_name": "LayoutModel",

"_view_count": null,

"_view_module": "@jupyter-widgets/base",

"_view_module_version": "1.2.0",

"_view_name": "LayoutView",

"align_content": null,

"align_items": null,

"align_self": null,

"border": null,

"bottom": null,

"display": null,

"flex": null,

"flex_flow": null,

"grid_area": null,

"grid_auto_columns": null,

"grid_auto_flow": null,

"grid_auto_rows": null,

"grid_column": null,

"grid_gap": null,

"grid_row": null,

"grid_template_areas": null,

"grid_template_columns": null,

"grid_template_rows": null,

"height": null,

"justify_content": null,

"justify_items": null,

"left": null,

"margin": null,

"max_height": null,

"max_width": null,

"min_height": null,

"min_width": null,

"object_fit": null,

"object_position": null,

"order": null,

"overflow": null,

"overflow_x": null,

"overflow_y": null,

"padding": null,

"right": null,

"top": null,

"visibility": null,

"width": null

}

},

"497302a6acc84773a84ff23cd36da214": {

"model_module": "@jupyter-widgets/controls",

"model_module_version": "1.5.0",

"model_name": "HBoxModel",

"state": {

"_dom_classes": [],

"_model_module": "@jupyter-widgets/controls",

"_model_module_version": "1.5.0",

"_model_name": "HBoxModel",

"_view_count": null,

"_view_module": "@jupyter-widgets/controls",

"_view_module_version": "1.5.0",

"_view_name": "HBoxView",

"box_style": "",

"children": [

"IPY_MODEL_b62cf033432f40e0a9ee259515167b51",

"IPY_MODEL_22eed99a28c74a80a42f95cc2529913b",

"IPY_MODEL_1f07aa6d2b744092ac1b2f13352b3909"

],

"layout": "IPY_MODEL_7a7468b6571c4b818f706b13e37345b5"

}

},

"6747454af34a467690d783bae764fbfa": {

"model_module": "@jupyter-widgets/base",

"model_module_version": "1.2.0",

"model_name": "LayoutModel",

"state": {

"_model_module": "@jupyter-widgets/base",

"_model_module_version": "1.2.0",

"_model_name": "LayoutModel",

"_view_count": null,

"_view_module": "@jupyter-widgets/base",

"_view_module_version": "1.2.0",

"_view_name": "LayoutView",

"align_content": null,

"align_items": null,

"align_self": null,

"border": null,

"bottom": null,

"display": null,

"flex": null,

"flex_flow": null,

"grid_area": null,

"grid_auto_columns": null,

"grid_auto_flow": null,

"grid_auto_rows": null,

"grid_column": null,

"grid_gap": null,

"grid_row": null,

"grid_template_areas": null,

"grid_template_columns": null,

"grid_template_rows": null,

"height": null,

"justify_content": null,

"justify_items": null,

"left": null,

"margin": null,

"max_height": null,

"max_width": null,

"min_height": null,

"min_width": null,

"object_fit": null,

"object_position": null,

"order": null,

"overflow": null,

"overflow_x": null,

"overflow_y": null,

"padding": null,

"right": null,

"top": null,

"visibility": null,

"width": null

}

},

"74d5c798f06346e9bd1cf96bf72669ff": {

"model_module": "@jupyter-widgets/controls",

"model_module_version": "1.5.0",

"model_name": "HTMLModel",

"state": {

"_dom_classes": [],

"_model_module": "@jupyter-widgets/controls",

"_model_module_version": "1.5.0",

"_model_name": "HTMLModel",

"_view_count": null,

"_view_module": "@jupyter-widgets/controls",

"_view_module_version": "1.5.0",

"_view_name": "HTMLView",

"description": "",

"description_tooltip": null,

"layout": "IPY_MODEL_6747454af34a467690d783bae764fbfa",

"placeholder": "",

"style": "IPY_MODEL_d6ef70581b8841d4b7455f1074373478",

"value": " 1/1 [00:02<00:00, 2.84s/it]"

}

},

"7a23197e84e449a6b77b893f4bcc81fb": {

"model_module": "@jupyter-widgets/controls",

"model_module_version": "1.5.0",

"model_name": "ProgressStyleModel",

"state": {

"_model_module": "@jupyter-widgets/controls",

"_model_module_version": "1.5.0",

"_model_name": "ProgressStyleModel",

"_view_count": null,

"_view_module": "@jupyter-widgets/base",

"_view_module_version": "1.2.0",

"_view_name": "StyleView",

"bar_color": null,

"description_width": ""

}

},

"7a7468b6571c4b818f706b13e37345b5": {

"model_module": "@jupyter-widgets/base",

"model_module_version": "1.2.0",

"model_name": "LayoutModel",

"state": {

"_model_module": "@jupyter-widgets/base",

"_model_module_version": "1.2.0",

"_model_name": "LayoutModel",

"_view_count": null,

"_view_module": "@jupyter-widgets/base",

"_view_module_version": "1.2.0",

"_view_name": "LayoutView",

"align_content": null,

"align_items": null,

"align_self": null,

"border": null,

"bottom": null,

"display": null,

"flex": null,

"flex_flow": null,

"grid_area": null,

"grid_auto_columns": null,

"grid_auto_flow": null,

"grid_auto_rows": null,

"grid_column": null,

"grid_gap": null,

"grid_row": null,

"grid_template_areas": null,

"grid_template_columns": null,

"grid_template_rows": null,

"height": null,

"justify_content": null,

"justify_items": null,

"left": null,

"margin": null,

"max_height": null,

"max_width": null,

"min_height": null,

"min_width": null,

"object_fit": null,

"object_position": null,

"order": null,

"overflow": null,

"overflow_x": null,

"overflow_y": null,

"padding": null,

"right": null,

"top": null,

"visibility": null,

"width": null

}

},

"7ca294a7b4aa40668f8ea154f744a4b4": {

"model_module": "@jupyter-widgets/base",

"model_module_version": "1.2.0",

"model_name": "LayoutModel",

"state": {

"_model_module": "@jupyter-widgets/base",

"_model_module_version": "1.2.0",

"_model_name": "LayoutModel",

"_view_count": null,

"_view_module": "@jupyter-widgets/base",

"_view_module_version": "1.2.0",

"_view_name": "LayoutView",

"align_content": null,

"align_items": null,

"align_self": null,

"border": null,

"bottom": null,

"display": null,

"flex": null,

"flex_flow": null,

"grid_area": null,

"grid_auto_columns": null,

"grid_auto_flow": null,

"grid_auto_rows": null,

"grid_column": null,

"grid_gap": null,

"grid_row": null,

"grid_template_areas": null,

"grid_template_columns": null,

"grid_template_rows": null,

"height": null,

"justify_content": null,

"justify_items": null,

"left": null,

"margin": null,

"max_height": null,

"max_width": null,

"min_height": null,

"min_width": null,

"object_fit": null,

"object_position": null,

"order": null,

"overflow": null,

"overflow_x": null,

"overflow_y": null,

"padding": null,

"right": null,

"top": null,

"visibility": null,

"width": null

}

},

"906abe2734de4a7bbceb71fefb265ae8": {

"model_module": "@jupyter-widgets/controls",

"model_module_version": "1.5.0",

"model_name": "FloatProgressModel",

"state": {

"_dom_classes": [],

"_model_module": "@jupyter-widgets/controls",

"_model_module_version": "1.5.0",

"_model_name": "FloatProgressModel",

"_view_count": null,

"_view_module": "@jupyter-widgets/controls",

"_view_module_version": "1.5.0",

"_view_name": "ProgressView",

"bar_style": "success",

"description": "",

"description_tooltip": null,

"layout": "IPY_MODEL_2c90127799134934b6eb68325838a8a8",

"max": 167832240,

"min": 0,

"orientation": "horizontal",

"style": "IPY_MODEL_c0a6e820a2eb41dc9c6244f614272ef5",

"value": 167832240

}

},

"9d78a73117ff4b59b6f6499b7f978d14": {

"model_module": "@jupyter-widgets/controls",

"model_module_version": "1.5.0",

"model_name": "DescriptionStyleModel",

"state": {

"_model_module": "@jupyter-widgets/controls",

"_model_module_version": "1.5.0",

"_model_name": "DescriptionStyleModel",

"_view_count": null,

"_view_module": "@jupyter-widgets/base",

"_view_module_version": "1.2.0",

"_view_name": "StyleView",

"description_width": ""

}

},

"a1242cc96bf9450abbbc00593bc7c8a8": {

"model_module": "@jupyter-widgets/controls",

"model_module_version": "1.5.0",

"model_name": "HTMLModel",

"state": {

"_dom_classes": [],

"_model_module": "@jupyter-widgets/controls",

"_model_module_version": "1.5.0",

"_model_name": "HTMLModel",

"_view_count": null,

"_view_module": "@jupyter-widgets/controls",

"_view_module_version": "1.5.0",

"_view_name": "HTMLView",

"description": "",

"description_tooltip": null,

"layout": "IPY_MODEL_d27389522e9c437695f0e93a0ecf1f09",

"placeholder": "",

"style": "IPY_MODEL_16b0f13d883d48809d4703704f43204c",

"value": "adapter_model.safetensors: "

}

},

"a4a6ed211ca44d509cb82311c27caba3": {

"model_module": "@jupyter-widgets/base",

"model_module_version": "1.2.0",

"model_name": "LayoutModel",

"state": {

"_model_module": "@jupyter-widgets/base",

"_model_module_version": "1.2.0",

"_model_name": "LayoutModel",

"_view_count": null,

"_view_module": "@jupyter-widgets/base",

"_view_module_version": "1.2.0",

"_view_name": "LayoutView",

"align_content": null,

"align_items": null,

"align_self": null,

"border": null,

"bottom": null,

"display": null,

"flex": null,

"flex_flow": null,

"grid_area": null,

"grid_auto_columns": null,

"grid_auto_flow": null,

"grid_auto_rows": null,

"grid_column": null,

"grid_gap": null,

"grid_row": null,

"grid_template_areas": null,

"grid_template_columns": null,

"grid_template_rows": null,

"height": null,

"justify_content": null,

"justify_items": null,

"left": null,

"margin": null,

"max_height": null,

"max_width": null,

"min_height": null,

"min_width": null,

"object_fit": null,

"object_position": null,

"order": null,

"overflow": null,

"overflow_x": null,

"overflow_y": null,

"padding": null,

"right": null,

"top": null,

"visibility": null,

"width": null

}

},

"a6784a8092dd4652bb19a142b05986b1": {

"model_module": "@jupyter-widgets/controls",

"model_module_version": "1.5.0",

"model_name": "FloatProgressModel",

"state": {

"_dom_classes": [],

"_model_module": "@jupyter-widgets/controls",

"_model_module_version": "1.5.0",

"_model_name": "FloatProgressModel",

"_view_count": null,

"_view_module": "@jupyter-widgets/controls",

"_view_module_version": "1.5.0",

"_view_name": "ProgressView",

"bar_style": "success",

"description": "",

"description_tooltip": null,

"layout": "IPY_MODEL_3267638f39f34833955764925bc59ad9",

"max": 1,

"min": 0,

"orientation": "horizontal",

"style": "IPY_MODEL_7a23197e84e449a6b77b893f4bcc81fb",

"value": 1

}

},

"b62cf033432f40e0a9ee259515167b51": {

"model_module": "@jupyter-widgets/controls",

"model_module_version": "1.5.0",

"model_name": "HTMLModel",

"state": {

"_dom_classes": [],

"_model_module": "@jupyter-widgets/controls",

"_model_module_version": "1.5.0",

"_model_name": "HTMLModel",

"_view_count": null,

"_view_module": "@jupyter-widgets/controls",

"_view_module_version": "1.5.0",

"_view_name": "HTMLView",

"description": "",

"description_tooltip": null,

"layout": "IPY_MODEL_d2117400d4744ac498d1582a3336f905",

"placeholder": "",

"style": "IPY_MODEL_36e5a40703714c76ba41451af838a1fe",

"value": "README.md: 100%"

}

},

"c0a6e820a2eb41dc9c6244f614272ef5": {

"model_module": "@jupyter-widgets/controls",

"model_module_version": "1.5.0",

"model_name": "ProgressStyleModel",

"state": {

"_model_module": "@jupyter-widgets/controls",

"_model_module_version": "1.5.0",

"_model_name": "ProgressStyleModel",

"_view_count": null,

"_view_module": "@jupyter-widgets/base",

"_view_module_version": "1.2.0",

"_view_name": "StyleView",

"bar_color": null,

"description_width": ""

}

},

"c7a2e8fad27e4fa2b9c80a970002d6bd": {

"model_module": "@jupyter-widgets/controls",

"model_module_version": "1.5.0",

"model_name": "DescriptionStyleModel",

"state": {

"_model_module": "@jupyter-widgets/controls",

"_model_module_version": "1.5.0",

"_model_name": "DescriptionStyleModel",

"_view_count": null,

"_view_module": "@jupyter-widgets/base",

"_view_module_version": "1.2.0",

"_view_name": "StyleView",

"description_width": ""

}

},

"d2117400d4744ac498d1582a3336f905": {

"model_module": "@jupyter-widgets/base",

"model_module_version": "1.2.0",

"model_name": "LayoutModel",

"state": {

"_model_module": "@jupyter-widgets/base",

"_model_module_version": "1.2.0",

"_model_name": "LayoutModel",

"_view_count": null,

"_view_module": "@jupyter-widgets/base",

"_view_module_version": "1.2.0",

"_view_name": "LayoutView",

"align_content": null,

"align_items": null,

"align_self": null,

"border": null,

"bottom": null,

"display": null,

"flex": null,

"flex_flow": null,

"grid_area": null,

"grid_auto_columns": null,

"grid_auto_flow": null,

"grid_auto_rows": null,

"grid_column": null,

"grid_gap": null,

"grid_row": null,

"grid_template_areas": null,

"grid_template_columns": null,

"grid_template_rows": null,

"height": null,

"justify_content": null,

"justify_items": null,

"left": null,

"margin": null,

"max_height": null,

"max_width": null,

"min_height": null,

"min_width": null,

"object_fit": null,

"object_position": null,

"order": null,

"overflow": null,

"overflow_x": null,

"overflow_y": null,

"padding": null,

"right": null,

"top": null,

"visibility": null,

"width": null

}

},

"d27389522e9c437695f0e93a0ecf1f09": {

"model_module": "@jupyter-widgets/base",

"model_module_version": "1.2.0",

"model_name": "LayoutModel",

"state": {

"_model_module": "@jupyter-widgets/base",

"_model_module_version": "1.2.0",

"_model_name": "LayoutModel",

"_view_count": null,

"_view_module": "@jupyter-widgets/base",

"_view_module_version": "1.2.0",

"_view_name": "LayoutView",

"align_content": null,

"align_items": null,

"align_self": null,

"border": null,

"bottom": null,

"display": null,

"flex": null,

"flex_flow": null,

"grid_area": null,

"grid_auto_columns": null,

"grid_auto_flow": null,

"grid_auto_rows": null,

"grid_column": null,

"grid_gap": null,

"grid_row": null,

"grid_template_areas": null,

"grid_template_columns": null,

"grid_template_rows": null,

"height": null,

"justify_content": null,

"justify_items": null,

"left": null,

"margin": null,

"max_height": null,

"max_width": null,

"min_height": null,

"min_width": null,

"object_fit": null,

"object_position": null,

"order": null,

"overflow": null,

"overflow_x": null,

"overflow_y": null,

"padding": null,

"right": null,

"top": null,

"visibility": null,

"width": null

}

},

"d6ef70581b8841d4b7455f1074373478": {

"model_module": "@jupyter-widgets/controls",

"model_module_version": "1.5.0",

"model_name": "DescriptionStyleModel",

"state": {

"_model_module": "@jupyter-widgets/controls",

"_model_module_version": "1.5.0",

"_model_name": "DescriptionStyleModel",

"_view_count": null,

"_view_module": "@jupyter-widgets/base",

"_view_module_version": "1.2.0",

"_view_name": "StyleView",

"description_width": ""

}

},

"d937f93cbe12423d9198d740769eaaad": {

"model_module": "@jupyter-widgets/base",

"model_module_version": "1.2.0",

"model_name": "LayoutModel",

"state": {

"_model_module": "@jupyter-widgets/base",

"_model_module_version": "1.2.0",

"_model_name": "LayoutModel",

"_view_count": null,

"_view_module": "@jupyter-widgets/base",

"_view_module_version": "1.2.0",

"_view_name": "LayoutView",

"align_content": null,

"align_items": null,

"align_self": null,

"border": null,

"bottom": null,

"display": null,

"flex": null,

"flex_flow": null,

"grid_area": null,

"grid_auto_columns": null,

"grid_auto_flow": null,

"grid_auto_rows": null,

"grid_column": null,

"grid_gap": null,

"grid_row": null,

"grid_template_areas": null,

"grid_template_columns": null,

"grid_template_rows": null,

"height": null,

"justify_content": null,

"justify_items": null,

"left": null,

"margin": null,

"max_height": null,

"max_width": null,

"min_height": null,

"min_width": null,

"object_fit": null,

"object_position": null,

"order": null,

"overflow": null,

"overflow_x": null,

"overflow_y": null,

"padding": null,

"right": null,

"top": null,

"visibility": null,

"width": null

}

},

"d9f595f07ea14377ad4f2af81b3dc236": {

"model_module": "@jupyter-widgets/controls",

"model_module_version": "1.5.0",

"model_name": "DescriptionStyleModel",

"state": {

"_model_module": "@jupyter-widgets/controls",

"_model_module_version": "1.5.0",

"_model_name": "DescriptionStyleModel",

"_view_count": null,

"_view_module": "@jupyter-widgets/base",

"_view_module_version": "1.2.0",

"_view_name": "StyleView",

"description_width": ""

}

},

"de7e5ca6727c4c0d99522d35981a4ad2": {

"model_module": "@jupyter-widgets/controls",

"model_module_version": "1.5.0",

"model_name": "HTMLModel",

"state": {

"_dom_classes": [],

"_model_module": "@jupyter-widgets/controls",

"_model_module_version": "1.5.0",

"_model_name": "HTMLModel",

"_view_count": null,

"_view_module": "@jupyter-widgets/controls",

"_view_module_version": "1.5.0",

"_view_name": "HTMLView",

"description": "",

"description_tooltip": null,

"layout": "IPY_MODEL_a4a6ed211ca44d509cb82311c27caba3",

"placeholder": "",

"style": "IPY_MODEL_c7a2e8fad27e4fa2b9c80a970002d6bd",

"value": " 176M/? [00:02<00:00, 82.4MB/s]"

}

},

"e08504a1906e41bf8cfe2e7d231e0f2f": {

"model_module": "@jupyter-widgets/controls",

"model_module_version": "1.5.0",

"model_name": "ProgressStyleModel",

"state": {

"_model_module": "@jupyter-widgets/controls",

"_model_module_version": "1.5.0",

"_model_name": "ProgressStyleModel",

"_view_count": null,

"_view_module": "@jupyter-widgets/base",

"_view_module_version": "1.2.0",

"_view_name": "StyleView",

"bar_color": null,

"description_width": ""

}

}

}

}

},

"nbformat": 4,

"nbformat_minor": 4

}

\n",

"\n",

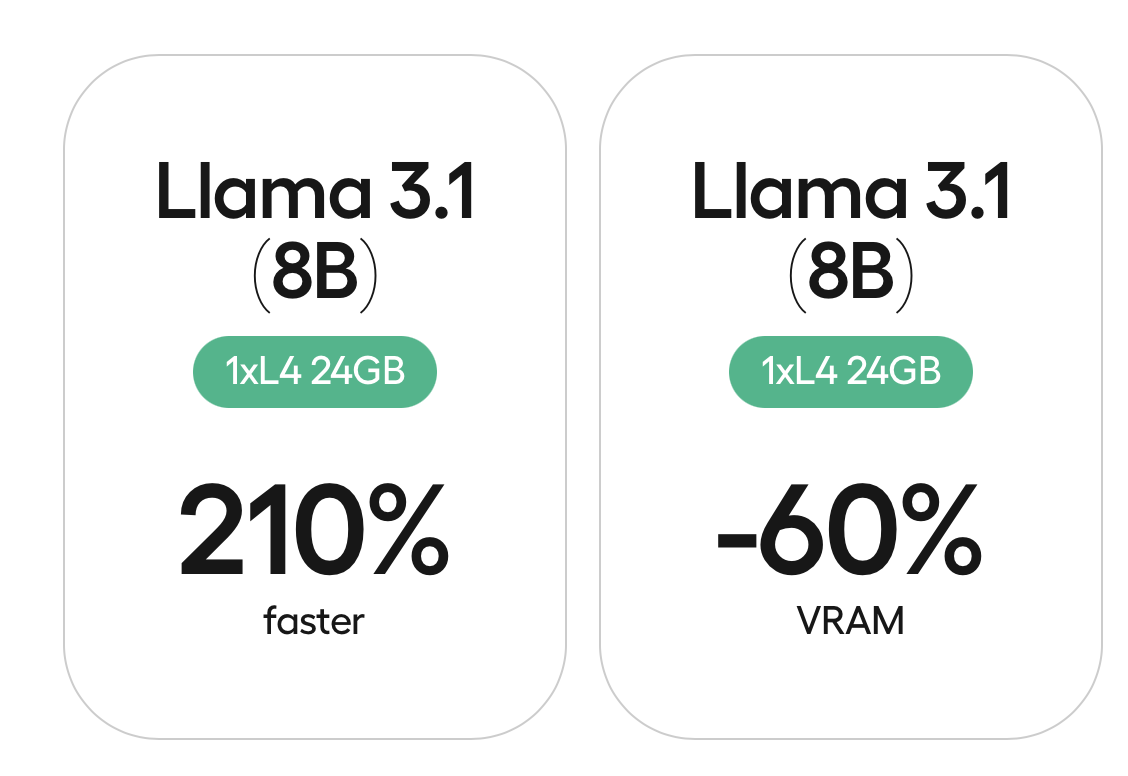

"* To run the fine-tuning process on Google Colab resources without waiting forever.  \n",

"\n",

"* 🎲 Work out why and when to use fine-tuning\n",

"* [🤹♀️ Learn how to prepare datasets for fine-tuning](#part2)\n",

"* 🚀 [Understand how to fine-tune a model with the unsloth framework](#part3) ([unsloth on GitHub](https://github.com/unslothai/unsloth))\n",

"* [📦👩💻 Master efficient fine-tuning techniques (**PEFT**, **LoRA**, **QLoRA**, and other indecent abbreviations)](#part4)\n",

"* [📦 Run inference and save the fine-tuned model correctly](#part4)\n",

"* 🥊 [Walk through fine-tuning on two example datasets:](#part6)\n",

" * build your own\n",

" * or take a ready-made dataset from HF ([article on Habr](https://habr.com/ru/articles/832984/))\n",

"* [🧸 Takeaways and conclusions ✅](#part6)\n",

"\n",

" "

]

},

{

"cell_type": "markdown",

"metadata": {},

"source": [

"

\n",

"\n",


"\n",

" "

]

},

{

"cell_type": "markdown",

"metadata": {},

"source": [

"